Before the title gives you the wrong idea about kops, let me stop you there! This is not a comparison of two amazing pieces of software, kops and EKS Fargate, based on their technical capabilities. I want to share our little story at Vervotech about one of the important steps in any DevOps journey: automated infrastructure provisioning across our product and enterprise service deployments.

A little about DevOps at Vervotech

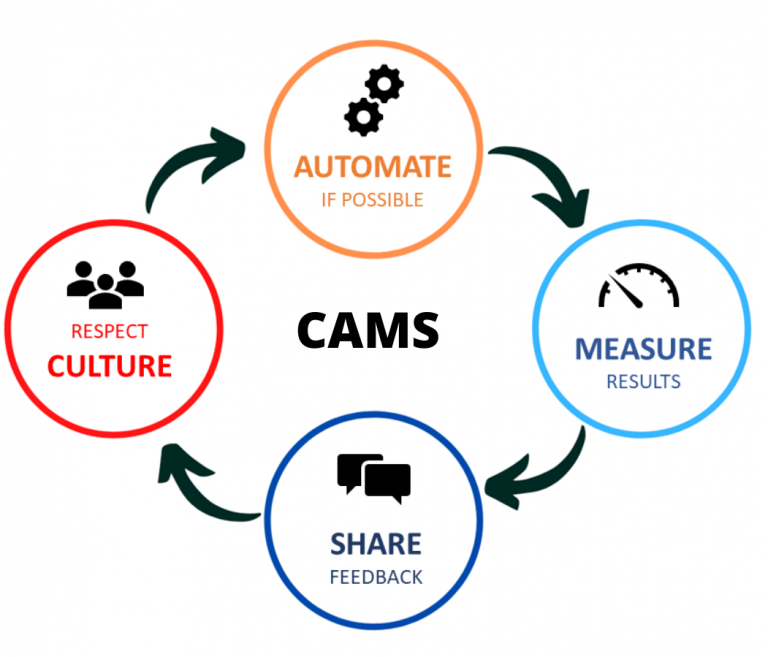

Automation is one of the most important components of the DevOps movement when viewed from the perspective of the CAMS model. CAMS stands for Culture, Automation, Measurement, and Sharing. You can read more about the CAMS model here.

Though there are many different understandings of DevOps and models for executing it, we strongly believe in and practice the CAMS model at Vervotech.

So, to achieve more and more automation, it becomes very important to automate one key area: infrastructure, and every activity around it, from defining and deploying it to maintaining and destroying it. All of that can be achieved with “Infrastructure as Code” (IaC).

IaC demands a lot of tooling at multiple stages of infrastructure automation. Fortunately, there is an amazing suite of tools for these different stages. Here is a very short summary of the stages and their well-known tools:

- For server configuration management, we can use tools like Chef, Puppet, Ansible, and SaltStack.

- Tools like Vagrant, Packer, and Docker are for server templating.

- For orchestration purposes, we can use Kubernetes, Docker Swarm, Nomad, Mesos, etc.

- And finally, for infrastructure provisioning, we can use Terraform, CloudFormation, Google Deployment Manager, or Azure Resource Manager. A few of these provisioning tools run on one cloud provider only.

So, why did we originally decide to use kops on AWS?

The answer is that AWS charges $144 per month for the managed EKS service. Yes, you read that correctly, this was the reason we did not use EKS!
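The $144 figure is consistent with a flat hourly control-plane fee billed over a 30-day month. As a rough sketch of that arithmetic, assuming a fee of $0.20 per cluster-hour (the hourly rate is an assumption, not a number stated in this post):

```python
from decimal import Decimal

# Assumption: a flat control-plane fee of $0.20 per cluster-hour.
# The post only gives the monthly total; this rate is chosen because
# it reproduces that total over a 30-day month.
ASSUMED_HOURLY_FEE = Decimal("0.20")


def monthly_control_plane_cost(
    hourly_fee: Decimal, days: int = 30, clusters: int = 1
) -> Decimal:
    """Return the control-plane fee for `clusters` clusters over `days` days."""
    return hourly_fee * 24 * days * clusters


if __name__ == "__main__":
    # One cluster, 30 days: 0.20 * 24 * 30
    print(monthly_control_plane_cost(ASSUMED_HOURLY_FEE))  # prints 144.00
```

The fee is per cluster, so it multiplies with every additional environment (staging, production, and so on), and it comes on top of the worker node costs.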

Also, EKS was not mature at the time and was available in only a handful of AWS regions.

Yes, we had the option of Google Kubernetes Engine (GKE), which doesn’t charge for the managed Kubernetes service (excluding worker nodes), but we had some organizational constraints around technology strategy at the time.

In fact, we currently have another product, Vervotech Mappings, running on GKE on GCP.

Then what made us look for a change?

The setup ran fine for a year. But as traffic grew, as with any other cloud deployment, issues started popping up: the need for better infrastructure workflows, occasional production outages, difficulty maintaining the existing infrastructure, requests to provision new infrastructure, and security breaches. All of these demanded a prompt, accurate, and consistent response from our DevOps automation.

A few of the issues mentioned above were not necessarily caused by kops, but they were definitely caused by partial, incomplete infrastructure automation. kops takes care of most of the networking and compute resources for the Kubernetes cluster, but for other cloud resources we relied on ad-hoc scripts and on scripts that called the AWS CLI directly. This area, defining and managing all the cloud resources apart from the kops-managed infrastructure, needed more automation.

What did we achieve in the end?

So, we started coding all our cloud resources using Terraform modules, including VPC, EKS, Fargate profiles, Kubernetes, S3, Route 53, and other managed services. It took us a while to build the Terraform configuration because our first attempt was to bring our existing kops infrastructure under Terraform using terraform import. We later scrapped that idea, since we found the import process is really not straightforward.
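To give a feel for what coding a resource looks like, here is a minimal sketch of a single Fargate profile declaration. The post does not show its actual configuration, which was most likely written in Terraform's own HCL syntax; this sketch instead uses Python to emit the equivalent Terraform JSON syntax, which Terraform reads from `.tf.json` files. The helper `fargate_profile` and every value passed to it (cluster name, role ARN, subnet IDs, namespace) are hypothetical placeholders.

```python
import json


def fargate_profile(name, cluster_name, namespace, role_arn, subnet_ids):
    """Build a Terraform JSON document declaring one aws_eks_fargate_profile."""
    return {
        "resource": {
            "aws_eks_fargate_profile": {
                name: {
                    "cluster_name": cluster_name,
                    "fargate_profile_name": name,
                    "pod_execution_role_arn": role_arn,
                    "subnet_ids": list(subnet_ids),
                    # Pods created in this namespace are scheduled onto Fargate.
                    "selector": [{"namespace": namespace}],
                }
            }
        }
    }


if __name__ == "__main__":
    # All values below are made-up placeholders, not real infrastructure.
    doc = fargate_profile(
        name="apps",
        cluster_name="demo-cluster",
        namespace="apps",
        role_arn="arn:aws:iam::123456789012:role/demo-fargate-pod-execution",
        subnet_ids=["subnet-aaaa1111", "subnet-bbbb2222"],
    )
    # Saving this output as e.g. fargate.tf.json lets Terraform pick it up.
    print(json.dumps(doc, indent=2))
```

In a real configuration, the literal cluster name, role ARN, and subnet IDs would normally be references to other Terraform-managed resources rather than hard-coded strings, so that the whole stack can be created and destroyed together.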

We recommend using Terraform configurations from the very start of a project in your DevOps journey.

So, we addressed and solved the problems we were facing earlier:

- Apps that caused pod and cluster outages were moved to EKS Fargate, so that the impact is localized and handled by Fargate.

- We can define, deploy, and maintain the managed AWS services with Terraform configuration files.

- All the Kubernetes cluster logs are centralized in CloudWatch by EKS.

- Improved networking: In the old setup, we were running around 200 pods in the Kubernetes cluster on NodePort services. The way those were deployed required manual intervention in a few scenarios, such as adding target groups, defining the listener in the ALB, and adding nodes to target groups. All of this has gone away, since it is now coded in Terraform configuration files using the Terraform Kubernetes module and the Terraform EKS module.

- Improved auto-scaling with EKS and Fargate for the apps.

- Issues introduced by manual configuration have dropped significantly.

- Blue-Green deployments became less cumbersome.

- We can calculate the cost of each pod running in the cluster and build better pricing models for our customers.
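The per-pod cost calculation mentioned in the last point can be sketched roughly as follows. The post does not describe how the calculation is actually done; this sketch assumes each pod is billed in proportion to the vCPU and memory it requests and the time it runs, which is how Fargate prices workloads. The `PodUsage` type, the `pod_cost` helper, and both rates are hypothetical, and the rates are illustrative numbers rather than real AWS prices.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PodUsage:
    name: str
    vcpu: Decimal       # vCPUs requested by the pod
    memory_gb: Decimal  # memory requested by the pod, in GB
    hours: Decimal      # hours the pod ran in the billing period


def pod_cost(usage: PodUsage, vcpu_hour_rate: Decimal, gb_hour_rate: Decimal) -> Decimal:
    """Cost of one pod: (vCPU charge + memory charge) per hour, times hours run."""
    hourly = usage.vcpu * vcpu_hour_rate + usage.memory_gb * gb_hour_rate
    return hourly * usage.hours


if __name__ == "__main__":
    # Illustrative rates only; look up the current prices for your region.
    vcpu_rate = Decimal("0.04")
    gb_rate = Decimal("0.005")

    pods = [
        PodUsage("api", Decimal("0.5"), Decimal("1"), Decimal("100")),
        PodUsage("worker", Decimal("1"), Decimal("2"), Decimal("50")),
    ]
    for pod in pods:
        print(pod.name, pod_cost(pod, vcpu_rate, gb_rate))
```

Summing these per-pod costs by customer or by product is one way to feed the pricing models mentioned above.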

Some more learnings…

- Kubernetes cluster upgrades are much simpler with EKS than with kops, because AWS takes care of most of the upgrade.

- There is also a middle way: creating and managing the Kubernetes cluster using kops and Terraform together, as described here.

- Our experience of introducing Terraform on infrastructure already running in production was not great, because Terraform does not do a good job of importing existing infrastructure.

- There are certainly some considerations with EKS Fargate, which are listed here, but we are fine with those for now, and not all of our workloads run on EKS Fargate.

- Our infrastructure code is now version controlled, fast to roll out, self-serviceable, reusable, and documented (because, as argued here, there is no better documentation for a programmer than the code itself).

- Cloud-agnostic infrastructure programming and tooling is now possible. This means that switching to GCP, Microsoft Azure, or any other well-known cloud provider will be much easier.

The idea here was to share our experience and invite suggestions.
